i. Overview

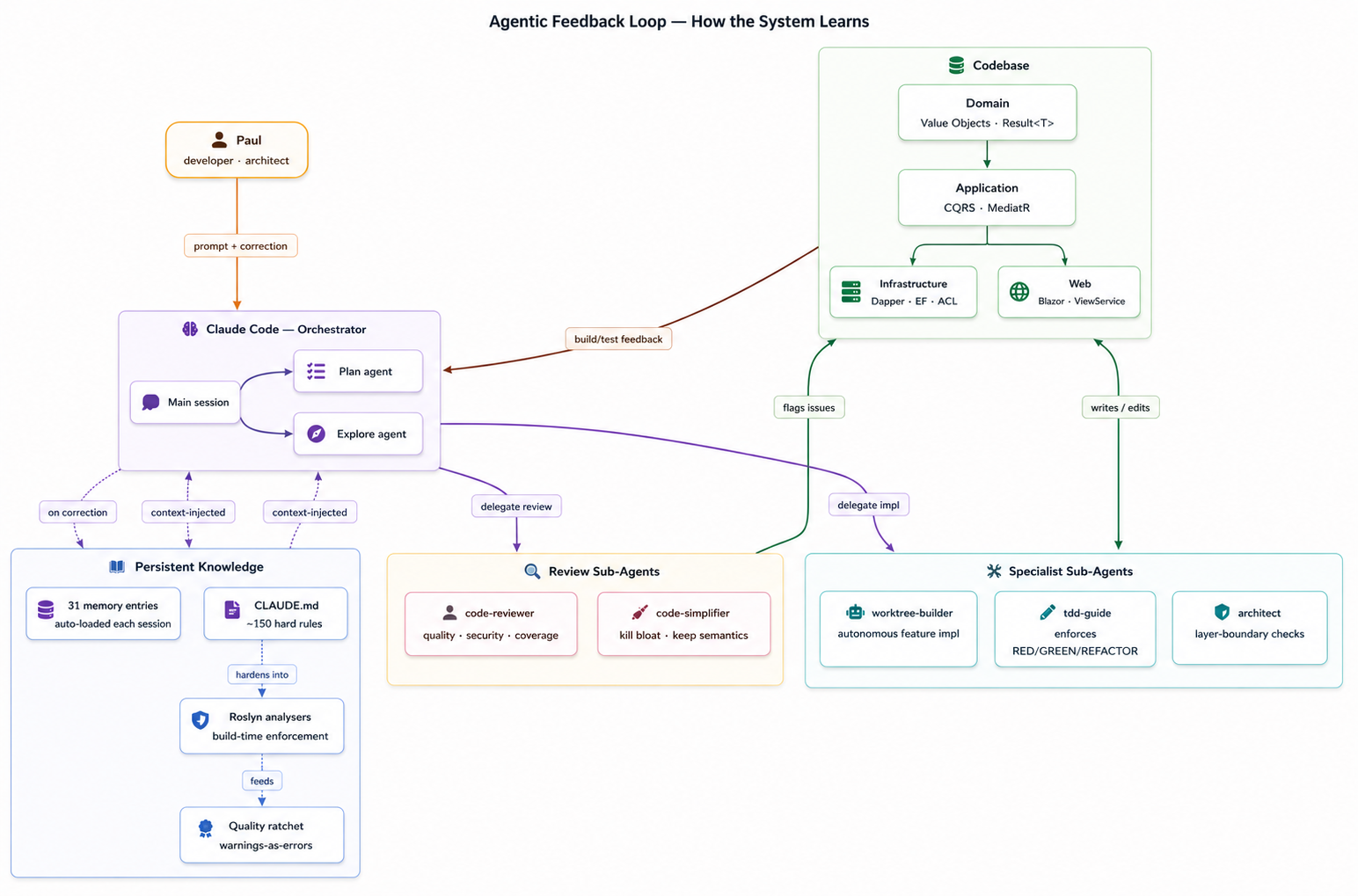

The defining feature of the project was not the target stack. It was the development model. Codebase and agent behaviour evolved together: every correction became a persistent memory, every architectural rule became a build-failing analyser, every recurring error became a guard test or a CLAUDE.md line.

The work averaged 10+ commits a day, with at least 3 Claude Code sessions running in parallel, and often 10+ on git worktrees.

Loo van Eck, 2026.

ii. Learning to Delegate

I have always been pedantic about my code and design decisions. On a project this size, holding on to that level of control would have made the project impossible.

The maths of agentic coding was unforgiving. To get real leverage I had to let multiple Claude Code sessions run in parallel against a production codebase without reading every line myself. What got me past that resistance was other people: Amsterdam AI meetups, hackathon weekends, evenings in community sessions with engineers a few weeks ahead of me on the same curve. Each had hit the same wall and worked out a piece of the answer.

The guardrails came first, before any new code. I spent the opening week extracting the legacy app’s behaviour into BDD scenarios, each paired with a Playwright screenshot, and walked users through the document until they signed off on what the system actually did. From there I built an end-to-end test suite against the legacy code itself, and those tests became the reference for the tests written first for the new app. The coding agents were never simply left to run free. The new code grew inside a harness that already knew what it should do.

iii. Agent Orchestration

The context layer that let multiple Claude Code agents work coherently against the same codebase:

- CLAUDE.md as the canonical rules document, with six markdown sub-agent definitions (worktree-builder, code-reviewer, simplify, Plan, Explore, general-purpose) composed into a feature pipeline.

- Custom slash-command suite (`/review`, `/simplify`, `/loop`, `/handoff`, `/mint-url`, plus the full `speckit.*` family) for repeatable, named operations rather than ad-hoc prompting.

- Adopted the context-mode plugin (`ctx`) for scoped context loading, which kept agent attention on the right files without bloating the prompt.

- Persistent memory captured every workflow correction, project decision and reference note so the next session inherited the rule.

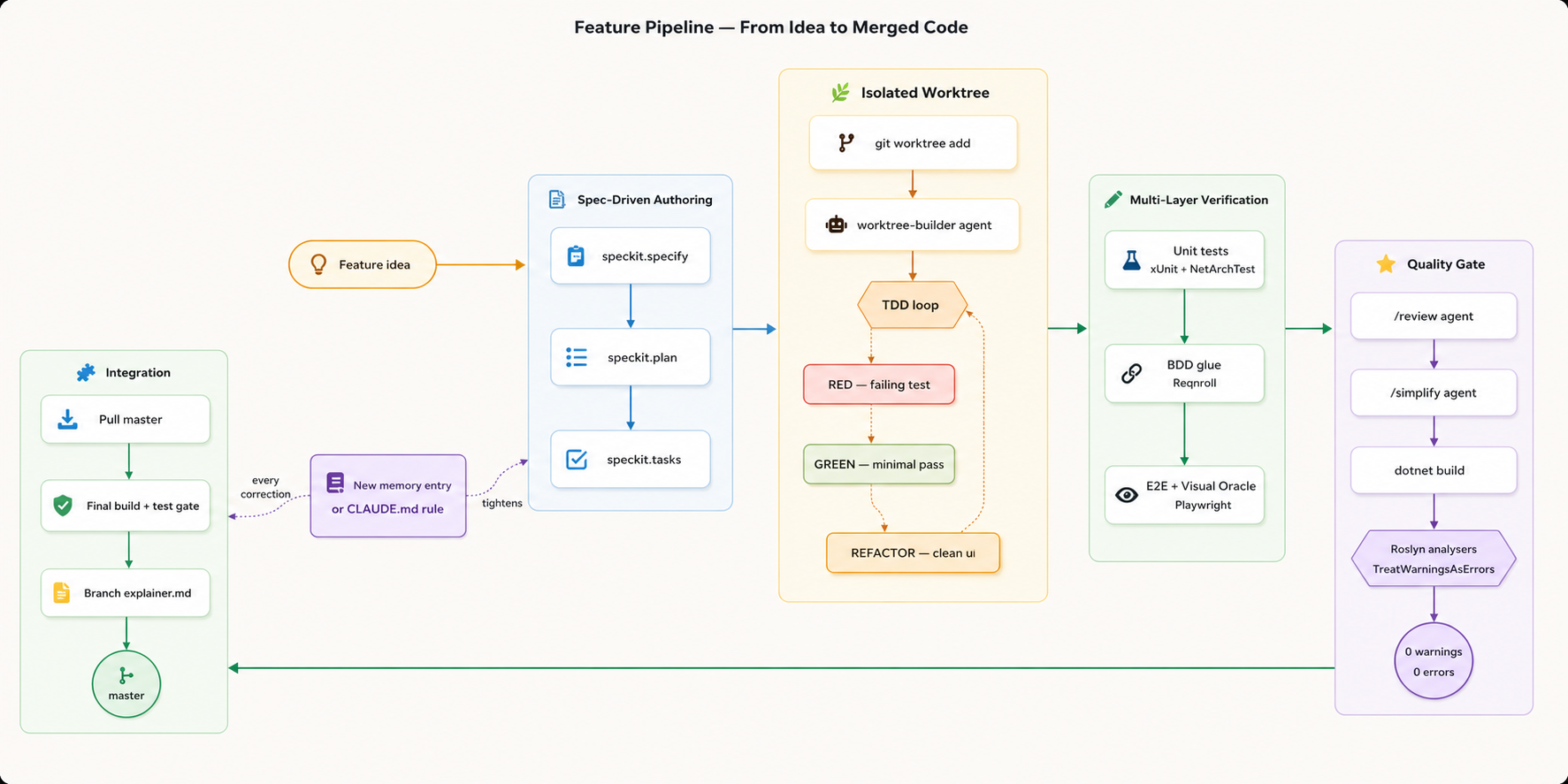

iv. Spec-Driven Parallel Execution

Every feature began as a written specification, not a prompt, generated using Spec Kit:

- Specifications authored through spec → plan → tasks → implement before any production code was written for the slice.

- Worktree-per-feature: each spec lived in its own git worktree with a dedicated builder agent, allowing multiple specs to be implemented in parallel without merge collisions.

- BDD scenarios in Reqnroll as the executable contract between spec and code. `.feature` files drove Playwright through a glue layer; a feature was not “done” until its scenarios passed.

- Conventional Commits and a mandatory build+test gate after every master merge; the rule went into CLAUDE.md.
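The worktree-per-feature step can be sketched as a small helper that builds one `git worktree add` command per spec. This is an illustration only: the `feature/<spec>` branch naming, the sibling-directory layout and the spec ids are assumptions, not the project's actual conventions.

```python
from pathlib import PurePosixPath


def worktree_command(repo_root: PurePosixPath, spec_id: str, base: str = "master") -> list[str]:
    """Build the git command that creates an isolated worktree for one spec."""
    branch = f"feature/{spec_id}"  # hypothetical branch naming scheme
    # Hypothetical layout: one sibling directory per spec, next to the main checkout.
    path = repo_root.parent / f"{repo_root.name}-{spec_id}"
    return ["git", "worktree", "add", "-b", branch, str(path), base]


if __name__ == "__main__":
    # Hypothetical spec ids; each command gives one builder agent its own checkout.
    for spec in ("001-login", "002-editor"):
        print(" ".join(worktree_command(PurePosixPath("/src/app"), spec)))
```

Because each spec gets its own directory and branch, parallel agents never share a working tree; conflicts surface only at merge time, where the build+test gate applies.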

v. Oracle Testing Against the Legacy

Specs said what the new system should do. The legacy app was the source of truth for what it actually did:

- Built purpose-specific applications that probed the legacy WebForms system as a black-box behavioural oracle, then wrapped those tools as Claude Code skills so any agent could call them inside its loop.

- Visual Oracle Pattern: Playwright captured screenshots of the new Blazor output and pixel-compared them against legacy screenshots. Behavioural parity was measured, not assumed.

- Testcontainers + Podman stood up real SQL Server instances for integration tests; the same Dapper / EF Core code paths exercised in production were exercised in CI.

- Hard rule: domain entity `Create` methods never invented validation rules absent from the legacy app. The legacy was authoritative, even when its rules were ugly.
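The pixel comparison behind the Visual Oracle Pattern can be illustrated with a minimal sketch. It assumes both screenshots have already been decoded into rows of RGB tuples; the function names, the per-channel tolerance and the 1% threshold are hypothetical and not taken from the project.

```python
Pixel = tuple[int, int, int]
Image = list[list[Pixel]]  # rows of RGB pixels


def mismatch_ratio(legacy: Image, rebuilt: Image, tolerance: int = 0) -> float:
    """Fraction of pixels where any channel differs by more than `tolerance`."""
    same_shape = len(legacy) == len(rebuilt) and all(
        len(a) == len(b) for a, b in zip(legacy, rebuilt)
    )
    if not same_shape:
        return 1.0  # different dimensions are treated as a full mismatch
    total = sum(len(row) for row in legacy)
    if total == 0:
        return 0.0
    differing = sum(
        1
        for row_a, row_b in zip(legacy, rebuilt)
        for pa, pb in zip(row_a, row_b)
        if any(abs(ca - cb) > tolerance for ca, cb in zip(pa, pb))
    )
    return differing / total


def has_parity(legacy: Image, rebuilt: Image, max_ratio: float = 0.01) -> bool:
    """True when the new page is within the (assumed) mismatch budget."""
    return mismatch_ratio(legacy, rebuilt) <= max_ratio
```

Reporting a ratio rather than a pass/fail flag is what makes parity measurable: a test can fail on the threshold while the number itself shows how far a page has drifted.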

vi. Compiler-Enforced Guardrails

Agents were fast but drifted. The fix was not more prompting — it was build-time enforcement.

- TreatWarningsAsErrors globally: a single yellow squiggly failed the build. The ratchet only went one way.

- A suite of custom Roslyn analysers turned house style into compile errors. No primitives in Domain APIs (Value Objects required). No `var` (explicit types only). No `Repository`/`Database`/`Dapper`/`EF` in port names. Bans on ambient statics and implementation-revealing fully-qualified names.

- NetArchTest enforced hexagonal layer dependencies: Razor could not reach into Application or Domain, Domain had no infrastructure imports, the Anti-Corruption Layer was the only path between external schemas and domain types.

- Combined with off-the-shelf analysers and a pre-commit hook running `dotnet build`, an agent could not quietly skip a step. If it forgot, the build failed and the worktree refused to commit.
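The logic of one such rule, the ban on implementation terms in port names, can be shown in a few lines. This is not the Roslyn analyser itself, which would be written in C# and walk the syntax tree; it is a simplified sketch of the check, using case-sensitive substring matching and hypothetical port names.

```python
# Terms taken from the rule described above.
BANNED_TERMS = ("Repository", "Database", "Dapper", "EF")


def banned_terms_in(port_name: str) -> list[str]:
    """Return the banned terms found in a port name (case-sensitive substring match)."""
    return [term for term in BANNED_TERMS if term in port_name]


def check_ports(port_names: list[str]) -> list[str]:
    """Return one error message per offending port; an empty list means the rule passes."""
    errors = []
    for name in port_names:
        hits = banned_terms_in(name)
        if hits:
            errors.append(f"{name}: port name reveals implementation ({', '.join(hits)})")
    return errors


if __name__ == "__main__":
    # Hypothetical port names, for illustration only.
    problems = check_ports(["IOrderStore", "ICustomerRepository"])
    for line in problems:
        print(line)
    raise SystemExit(1 if problems else 0)  # non-zero exit fails the build step
```

The point of the non-zero exit is the same as with the analysers: the rule is enforced by the build, not by asking the agent to remember it.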

vii. Hexagonal Architecture & CQRS

The architecture was what made the rebuild tractable:

- Hexagonal arrangement: ports & adapters with strict layer policing, MediatR-based CQRS (one handler per command/query), `Result<T>` for explicit failure modes, and a Web-layer ViewService Facade between every Razor component and the Application layer.

- Anti-Corruption Layer as `ExternalRow → Translator → Domain`. The legacy database schema never leaked past Infrastructure, so the new domain model was free to be clean.

- Dapper for legacy stored-procedure adapters, EF Core for the internal database, SQL Server FileTable for the editor image library, Polly for retry and circuit-breaker on the external DB connection.
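The `ExternalRow → Translator → Domain` flow and the explicit-failure result type can be sketched together. The project itself is .NET; this Python version only illustrates the shape. The `Customer` types, the `CUST_*` column names and the blank-name check are all hypothetical.

```python
from dataclasses import dataclass
from typing import Generic, Optional, TypeVar

T = TypeVar("T")


@dataclass(frozen=True)
class Result(Generic[T]):
    """Either a value or an error message; failures are returned, never thrown."""
    value: Optional[T] = None
    error: Optional[str] = None

    @property
    def is_success(self) -> bool:
        return self.error is None


@dataclass(frozen=True)
class ExternalCustomerRow:
    """Hypothetical legacy row shape; it stays inside the infrastructure layer."""
    CUST_ID: int
    CUST_NM: Optional[str]


@dataclass(frozen=True)
class CustomerId:
    """Value object, so the domain API does not expose a bare int."""
    value: int


@dataclass(frozen=True)
class Customer:
    id: CustomerId
    name: str


def translate(row: ExternalCustomerRow) -> Result[Customer]:
    """Translator: the only place that knows both the legacy schema and the domain."""
    if row.CUST_NM is None or not row.CUST_NM.strip():
        # Illustrative check only; real rules would come from the legacy behaviour.
        return Result(error=f"row {row.CUST_ID} has no usable name")
    return Result(value=Customer(CustomerId(row.CUST_ID), row.CUST_NM.strip()))
```

Callers receive a `Customer` or an error and never see a `CUST_NM` column, which is what keeps the legacy schema from leaking into the domain model.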

viii. Key Contributions

- Designed the hexagonal architecture and the agent collaboration model that produced it.

- Built the worktree-per-feature parallel execution workflow that let one engineer ship at multi-engineer pace.

- Authored the Spec Kit specifications driving the migration end to end.

- Wrote the custom Roslyn analyser suite that turned architectural intent into build-time enforcement.

- Established the Visual Oracle Pattern and the legacy-probing skills that gave the migration measurable behavioural parity.

- Captured the persistent memory rules so the next session started smarter than the last.

- Delivered the rebuild in 6 weeks against a prior estimate of more than twice that.